🫀MLOps recap, part 1

As requested by our readers, we put together a quick recap of our ongoing series about MLOps. As the proverb says: Repetition is the mother of learning ;) Subscribe to read every article of this series for as low as $24.50 per year.

Let’s start with a short introduction to the whole category:

💡 ML Concept of the Day: What is MLOps?

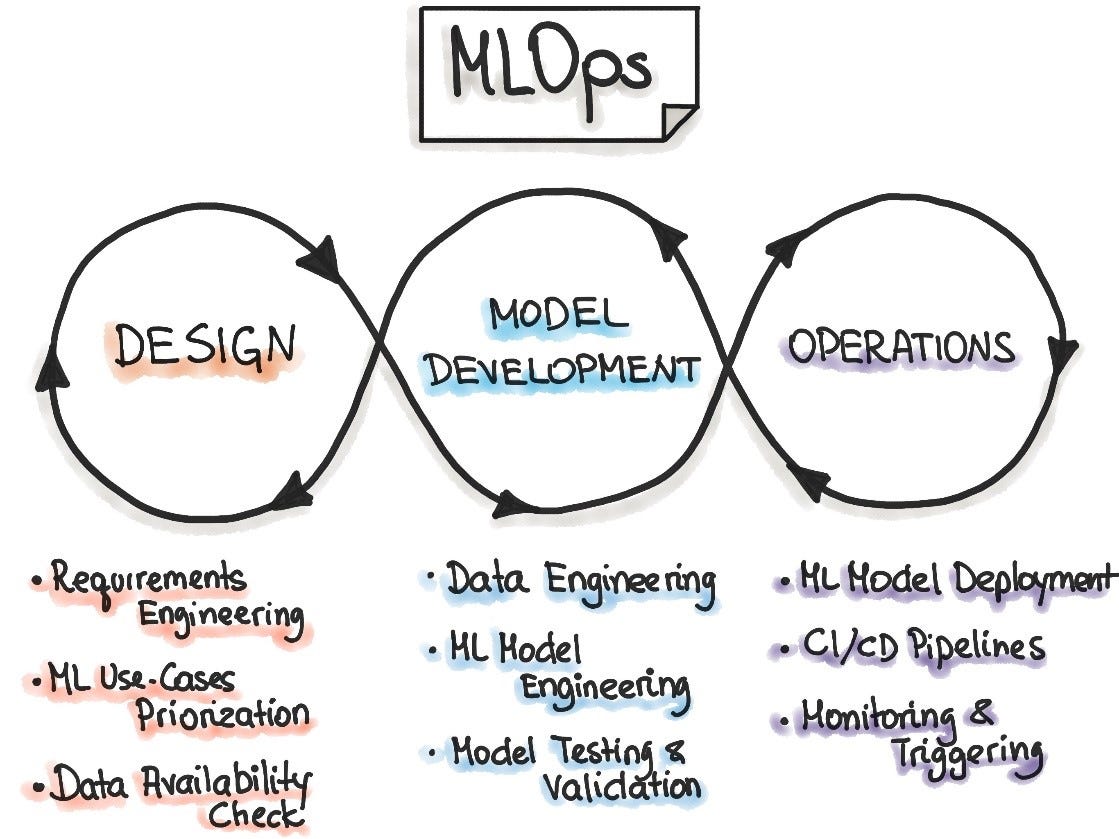

One of the challenges in understanding MLOps is that the term itself is used very loosely in the ML community. In general, we should think about MLOps as an extension of DevOps methodologies, optimized for the lifecycle of ML applications. This definition makes perfect sense if we consider how fundamentally different the lifecycle of ML applications is compared to that of traditional software programs. For starters, ML applications are composed of both models and data, and they include stages such as training or hyperparameter optimization that have no equivalent in traditional software applications.

Just like DevOps, MLOps looks to manage the different stages of the lifecycle of ML applications. More specifically, MLOps encompasses diverse areas such as data/model versioning, continuous integration, model monitoring, model testing, and many others. In no time, MLOps evolved from a set of best practices into a holistic approach to ML lifecycle management.

Let’s see what else we’ve got for you:

In Edge#139, we cover TFX, a TensorFlow-based architecture created by Google to manage machine learning models; +MLflow, a platform for end-to-end machine learning lifecycle management.

In Edge#141, we discuss Model Monitoring; +Google’s research paper about the building blocks of interpretability; +a few ML monitoring platforms: Arize AI, Fiddler, WhyLabs, Neptune AI.

In Edge#143, we offer you the recap of articles dedicated to feature stores. Why did they become a crucial part of the MLOps stack?

In Edge#145 (you can read it without a subscription), we discuss model observability and how it differs from model monitoring; +Manifold, an architecture for debugging ML models; +Arize AI, which provides a foundation for ML observability.

In Edge#147, we explain what model serving is; +the TensorFlow serving paper; +TorchServe, a super simple serving framework for PyTorch.

In Edge#151, we discuss Model Packaging; +Typed Features at LinkedIn; +ONNX, a key framework for ML interoperability.

We will continue covering MLOps dimensions in January. Stay tuned! Don’t forget to subscribe to get access to all fresh articles and the full archive. It’s only $24.50/year. Some spend more than that on coffee in a few days ;)

MLOps doesn’t sound enticing? Find a topic that matches your interests:

This is just a small part of what we’ve covered, and there is SO MUCH more interesting material ahead, as the pace of AI and ML development has sped up tremendously.

Thank you for reading and spreading the word. You are awesome!